100ms -> 40ms -> 1ms: Maximizing response caching in Laravel

How to take (some) response times from 100ms to 40ms to 1ms.

All the tactics below are based on my site — lean-admin.dev deployed on Laravel Vapor. The site has a landing page, pricing page, docs pages (Laravel-powered), and a user dashboard.

As always, YMMV.

Caching what?

We'll be caching HTTP responses. This means the actual output from your webserver that's sent to the browser. Not things that happen on the server, such as database queries.

Caching for those things should still be implemented for this to be effective. Yes, you can, to a certain extent, use response caching as a general solution to server performance issues, but you should never depend on it. Response caching is like a progressive enhancement: it will make your app faster in some ways and in some cases, but your app must work perfectly well without it.

Caches

First, let's go over where we want to cache our responses.

This article will cover what everyone can do, so it won't talk about webserver caching.

Browser

The browser can cache responses, making them go from ~100ms to ~1ms, since they're served from the browser's memory, and no network requests are needed.

Browser caching can, unlike CloudFlare, be used for private information too.

However, when the browser cache misses, the response will be slower than from CF.

Important piece of information: the browser caches responses based on instructions found in the Cache-Control header.

CloudFlare

CloudFlare serves as a shared cache for your customers. It's not as fast as browser caching, but it will take the response time from ~100ms (optimized webserver) to ~50ms, because it serves your content from a distributed network. This means users visiting from Europe will get the content from a server close to them, and so will users visiting from South America, or any other part of the world.

CF can't be used for caching private information (e.g. the profile page for a logged in user).

Important piece of information: CF caches responses based on its own defaults, response headers, custom page rules, and cookie presence. It can also modify the response before it gets to the browser.

The individual variables have roughly this priority:

- Custom page rules: settings that you can apply on specific routes in the CF dashboard

- CF security defaults: if a response has a cookie, it won't be cached

- Response headers: Cache-Control

- CF performance defaults: such as optimizing images and .js files

So, based on the above, there's an obvious issue. Laravel sets cookies. Even when we're not using auth.

There used to be a way to remove them on CF for free, but now there isn't. So we'll need a custom middleware to remove cookies.

Before you do this, make sure you really don't need them. Also: Tools like Google Analytics will still work. CF only has an issue with Set-Cookie headers on the server, but JS scripts can set cookies themselves just fine.

The code for removing cookies, as well as all the other functionality we'll need, is at the end of the article.

Two scenarios

There are two scenarios for each request.

Either there will be an authenticated user, or it will be a guest request.

Each scenario requires a different caching strategy.

Authenticated users

The first thing you should do when you detect that a user is authenticated is set the Cache-Control header to private. This means that the content may be cacheable (depending on the other directives), but it definitely can't be stored in shared caches like CF or corporate proxies.

Now, some considerations.

Let's say that a logged in user views your landing page. The only unique thing there is that the user's name is displayed in the navbar. So, what if the user changes his username and navigates to the landing page? He'll see his old username in the navbar.

That's not ideal, but let's see what the options are.

- Cache it for a long time, don't care

- Cache it for a long time, but require validation on each request (the browser will ask the server if it has the up-to-date version)

- Don't cache at all

- Cache for a short period of time

The first solution is horrible: it will make your customers wonder why the site's broken. The second solution is slightly better, but still horrible. The cache will only prevent entire responses from being transmitted, but it won't prevent the browser from pointlessly connecting to the server.

Do a little experiment: Create a route like this:

Route::get('/cache-test', fn () => '');

And visit /cache-test in the browser. Note the response time. Now visit a page that actually transmits HTML. Note the response time.

Odds are, the response times will be basically identical.

The real overhead is not your server's performance, it's networking. It takes time to establish a connection with the server.

So if you go with a strategy that doesn't transmit HTML, but checks if the cache is up-to-date on each request, you're not solving much, and you'll have to implement a complex caching mechanism. Not good either.
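
For reference, this is roughly what per-request validation involves. It's a minimal sketch in plain PHP, not the approach used on my site: `respondWithValidation` is a hypothetical helper, and using an MD5 hash of the body as the ETag is an assumption made for brevity. The `no-cache` directive tells the browser it may store the response but must revalidate before reusing it.

```php
<?php

/**
 * Hypothetical helper: returns [status, headers, body] for a conditional GET.
 *
 * @return array{0: int, 1: array<string, string>, 2: string}
 */
function respondWithValidation(string $body, ?string $ifNoneMatch): array
{
    // Assumption: the ETag is just a hash of the rendered body
    $etag = '"' . md5($body) . '"';
    $headers = ['ETag' => $etag, 'Cache-Control' => 'private, no-cache'];

    if ($ifNoneMatch === $etag) {
        // The browser's copy is current: send headers only, no body
        return [304, $headers, ''];
    }

    return [200, $headers, $body];
}

// First visit: no If-None-Match header, full response
[$status1, $headers1, $body1] = respondWithValidation('<h1>Landing</h1>', null);

// Repeat visit: the browser sends back the ETag it stored
[$status2, , $body2] = respondWithValidation('<h1>Landing</h1>', $headers1['ETag']);
```

The second call skips the body, but the page still had to be rendered to compute the hash, and the browser still paid for the round trip.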

The third solution is better. No caching at all. Since the previous two options couldn't bring much value, why bother with the code complexity? Let's just not cache anything. This is the second best option of the ones listed here.

The best one is the last one: cache for a short period of time. It's the best of both worlds, with a minimal trade-off. You get everything cached, but customers won't see month-old data. Set the cache TTL to a minute or five. Page loads will be fast, and what will seem like "bugs" to customers will go unnoticed, or only be noticed for a minute.
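
To make the trade-off concrete, here is a small simulation of a short-TTL cache in plain PHP (8.0+). The `TtlCache` class and the explicit `$now` argument are hypothetical, for illustration only. In practice the browser or CF does this for you based on max-age.

```php
<?php

// Hypothetical illustration: a tiny in-memory cache that mimics max-age.
final class TtlCache
{
    /** @var array<string, array{value: string, storedAt: int}> */
    private array $entries = [];

    public function __construct(private int $ttlSeconds) {}

    // Returns the cached value while it is fresh; otherwise calls $origin and stores the result.
    public function get(string $key, int $now, callable $origin): string
    {
        $entry = $this->entries[$key] ?? null;

        if ($entry !== null && $now - $entry['storedAt'] < $this->ttlSeconds) {
            return $entry['value']; // Fresh: the origin is not contacted
        }

        $value = $origin();
        $this->entries[$key] = ['value' => $value, 'storedAt' => $now];

        return $value;
    }
}

$username = 'old-name';
$cache = new TtlCache(60);
$render = function () use (&$username) {
    return "Hello, $username";
};

$first = $cache->get('/', 0, $render);   // Cache miss: rendered by the "server"
$username = 'new-name';                  // The user renames themselves
$stale = $cache->get('/', 30, $render);  // Within the TTL: still the old name
$fresh = $cache->get('/', 61, $render);  // TTL expired: re-rendered with the new name
```

The old name can survive for at most one TTL, which is the whole trade-off.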

Note: If you don't have an app where the user would visit the same page multiple times in the cache TTL's timeframe (e.g. 1 minute), it doesn't make sense to cache it. At best, you'll gain nothing, and at worst, the app will look buggy for 1 minute at a time.

I recommend going with the last option (short TTL) and separating your pages into a cached group and a non-cached group (in the context of authenticated users). You don't want to cache the profile page, because if they update their email and still see the old one for a minute of refreshing, they'll be confused.

But you can safely cache all marketing sites, docs, and similar pages, even if they show the customer's name in the navbar, for example. As long as they see the right information on their profile page, they won't be bothered by this, and likely won't even notice. In my opinion it even intuitively feels like "it will fix itself in a while" by virtue of the page being fast and the profile screen having the right data.

Public pages:

Cache-Control: public, max-age=60

User dashboard:

Cache-Control: no-store, private, max-age=0

The no-store option means that the response can be stored neither in the browser nor in a proxy.

Guest users

With guest users, you can cache much more liberally. In fact, you can cache everything. So do it. Cache everything both on CloudFlare and in the browser.

A small consideration: When a guest becomes an authenticated user, they might still see the cached responses for some pages.

I recommend solving this the same way as described above, for authenticated users. Just use a short TTL.

Cache-Control: public, max-age=60

Putting it all together

We'll need to make a tiny change to our application and configure CloudFlare properly.

Let's start with the code changes.

Code

Create a middleware named e.g. CacheControl. Register it in your App\Http\Kernel like this:

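    // Assumes: use App\Http\Middleware\CacheControl;
    protected $middlewareGroups = [
        'web' => [
            CacheControl::class, // First in the list, so last middleware out
            // ... the rest of your web middleware
        ],
    ];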

The first middleware in is the last middleware out. And we want to affect responses right before they get sent to the browser, so we want to be the last middleware out.
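
If the in/out ordering feels abstract, the following sketch shows it with a hand-rolled pipeline. This is not Laravel's implementation: `runPipeline` and `tracer` are hypothetical helpers that only mimic the `($request, $next)` shape of middleware.

```php
<?php

// Hypothetical mini-pipeline: wraps the core handler in each middleware,
// starting from the last one, so the first middleware ends up outermost.
function runPipeline(array $middleware, callable $core): string
{
    $next = $core;

    foreach (array_reverse($middleware) as $layer) {
        $next = fn (string $request) => $layer($request, $next);
    }

    return $next('request');
}

// Builds a middleware that records when it is entered and when it is left.
function tracer(string $name, ArrayObject $log): callable
{
    return function (string $request, callable $next) use ($name, $log): string {
        $log[] = "$name in";
        $response = $next($request);
        $log[] = "$name out";

        return $response;
    };
}

$log = new ArrayObject();

runPipeline(
    [tracer('CacheControl', $log), tracer('StartSession', $log)],
    fn (string $request) => 'response'
);

// $log now holds: CacheControl in, StartSession in, StartSession out, CacheControl out
```

Because CacheControl is the last one out, every cookie added by the middleware below it is already on the response when we get to touch it.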

Inside the middleware's handle() method, add code like this:

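    // At the top of the middleware file:
    // use Closure;
    // use Illuminate\Http\Request;
    // use Illuminate\Support\Str;
    // use Symfony\Component\HttpFoundation\Response;

    public function handle(Request $request, Closure $next)
    {
        /** @var Response $response */
        $response = $next($request);

        if (auth()->check() || $this->hasForms($response)) {
            // Don't cache anything
            $response->setCache(['private' => true, 'max_age' => 0, 's_maxage' => 0, 'no_store' => true]);
        } else {
            // Cache all responses for 1 minute
            $response->setCache(['public' => true, 'max_age' => 60, 's_maxage' => 60]);

            // Remove all cookies. Path and domain are passed along because
            // removeCookie() only removes a cookie with a matching path and domain.
            foreach ($response->headers->getCookies() as $cookie) {
                $response->headers->removeCookie($cookie->getName(), $cookie->getPath(), $cookie->getDomain());
            }
        }

        return $response;
    }

    /** @param Response $response */
    protected function hasForms($response): bool
    {
        // getContent() can return false (e.g. streamed responses), hence the cast
        $content = strtolower((string) $response->getContent());

        // Looks for the hidden CSRF field at the start of a form
        return Str::of($content)->contains('<input type="hidden" name="_token"');
    }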

Let's see what's happening here:

- If there's a user, we say that the data is private, that it shouldn't be cached for more than 0 seconds in either the browser or CF, and that it shouldn't be stored anywhere at all. Some of these parts are unnecessary when the other ones are in place, but it's nicely explicit, and makes customizing it in the future easier.

- If there's a guest, we cache absolutely everything for 60 seconds. It's a public cache, so it's stored both on CF and in the browser.

- If there's a POST form on the page, we don't cache it. CSRF tokens are an infamously annoying problem with static caching. Here we avoid it completely.
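
The form check is easy to try in isolation. Below is a standalone sketch of the same string match, assuming PHP 8+ for `str_contains()`. `hasCsrfField` is a hypothetical stand-in for the `hasForms()` method, and the HTML samples are made up.

```php
<?php

// Standalone version of the check: looks for the hidden _token input.
function hasCsrfField(string $html): bool
{
    return str_contains(strtolower($html), '<input type="hidden" name="_token"');
}

$withForm = '<form method="POST"><input type="hidden" name="_token" value="abc123"></form>';
$withoutForm = '<h1>Pricing</h1>';
$metaOnly = '<meta name="csrf-token" content="abc123">';

$formDetected = hasCsrfField($withForm);     // true: the page will not be cached
$plainDetected = hasCsrfField($withoutForm); // false: safe to cache
$metaDetected = hasCsrfField($metaOnly);     // false: see the note below
```

Note the last case: the check matches one exact piece of markup, so a page that exposes the token some other way (a meta tag read by JavaScript, say) would slip through and get cached. If your layout does that, extend the check accordingly.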

CloudFlare

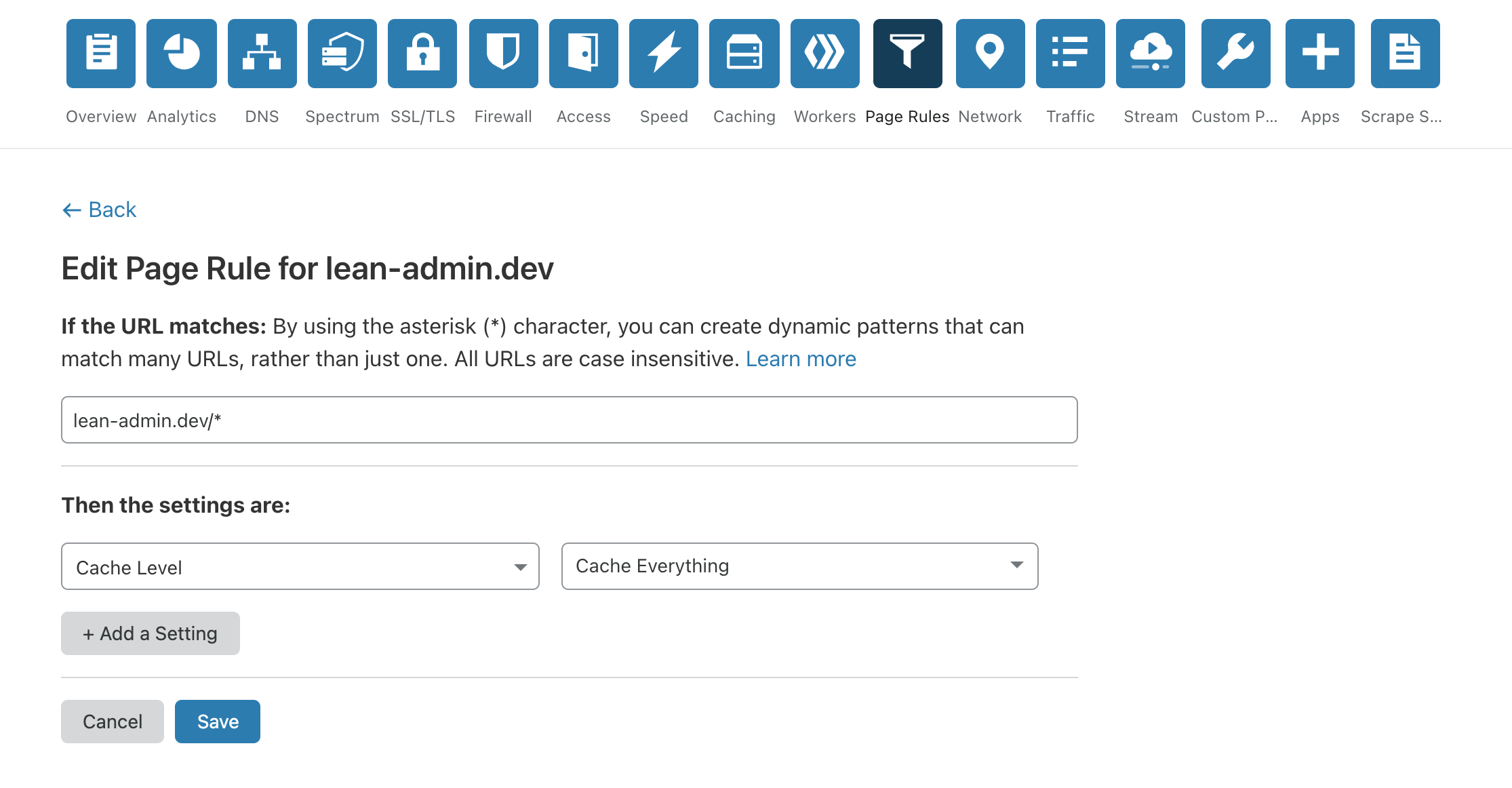

Open your site in CloudFlare (or sign up — it's free) and go to the Page Rules tab:

Create a page rule (you only need one) that sets Cache Level to Cache Everything.

You may wonder why "Cache Everything" if we only want to cache certain things. CloudFlare is a bit confusing about this, but basically, to be able to cache HTML responses at all, you need to set this config. That way, CloudFlare will default to caching everything, for the time specified in the Cache-Control response headers.

What about things that shouldn't be cached? If you look at the middleware code, you'll see that it always adds a cache header to the response. And the Cache Everything setting doesn't use cache if there's a private or max-age=0 or no-store value in the response. We set all of those values on some responses.
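
The rule from the previous paragraph can be written down as a small predicate. This is a sketch of the three conditions named above, not CloudFlare's actual algorithm; `sharedCacheMayStore` is a hypothetical name.

```php
<?php

// Hypothetical helper: false if any directive named in the text is present.
function sharedCacheMayStore(string $cacheControl): bool
{
    $directives = array_map('trim', explode(',', strtolower($cacheControl)));

    foreach (['private', 'no-store', 'max-age=0'] as $blocking) {
        if (in_array($blocking, $directives, true)) {
            return false;
        }
    }

    return true;
}

$guestPage = sharedCacheMayStore('public, max-age=60, s-maxage=60'); // true
$dashboard = sharedCacheMayStore('no-store, private, max-age=0');    // false
```

Since the middleware puts a Cache-Control header on every response, each response falls clearly into one of the two cases.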

So things will work the way our server says, except now CF will actually listen, no matter the content type of our responses.

By default, CloudFlare's TTL is a few hours. You may be able to lower it a bit below the default, or even more if you have a subscription (I believe it's 1 hour for my $20/mo plan).

This means that the first visitor will hit your app, with a response time of roughly 150ms. The next visitor will hit CF, with a response time of roughly 50ms. And so will all the other visitors. When the same visitor visits the same page twice within one minute (e.g. switches between your landing page and pricing page), the response time will be instant: 1ms.

Bonus: Caching specific pages for authenticated users.

As I mentioned above, we may still want to cache e.g. docs, even if there's an authenticated user.

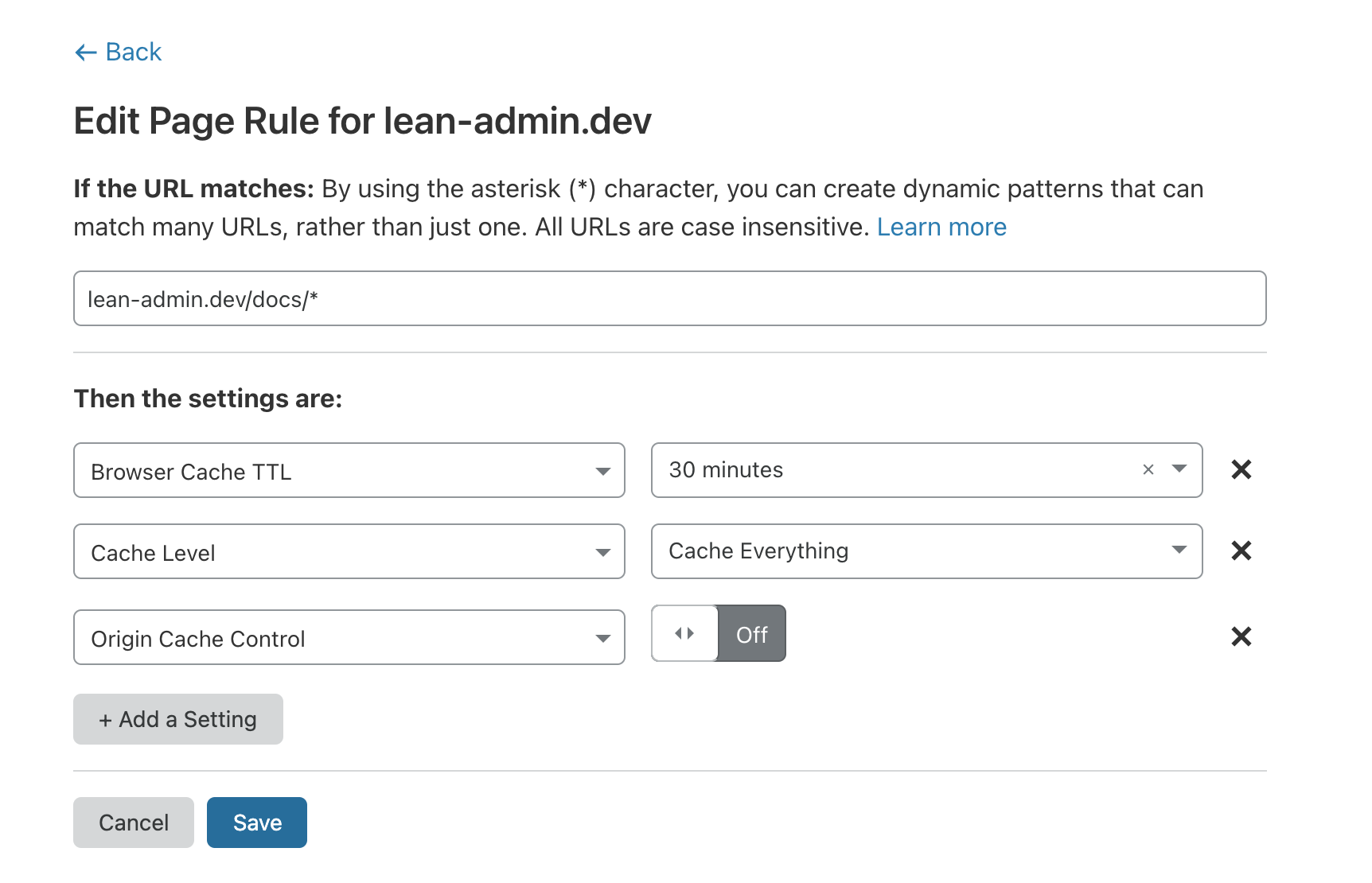

To do this, create rules for specific paths (/docs/*, /, /pricing, ...) with the following settings:

- Cache Level: Cache Everything

- Browser Cache TTL: 30 minutes (or your lowest option)

- Origin Cache Control: off

Note that we're defaulting to a disabled cache, and we're explicitly enabling it. The opposite could very easily get dangerous.

With this configuration, these pages will be cached even for authenticated users, in a way that makes the cache private to the user. We're only setting a new Cache-Control header for the browser (Browser Cache TTL), CloudFlare still gets instructions from the server.

However, this solution has downsides. We've set the browser cache TTL for /docs/* to 30 minutes, and it will be that for everyone, including guest users. Despite our 1 minute setting in the middleware.

An alternative solution would be to optionally flag routes as cacheable in your code. But that's not something I'll go through in this article because it's already long enough and frankly this use case (caching pages for authenticated users and requiring a lower TTL than whatever CF can offer) is not that common or important.

So you have to judge what solution makes sense for you. Caching is not a complex thing, but it's a thing that requires consideration.

Make the decisions and enjoy your new response times :)

Comments

Vladimir Mityukov:

... 4. Cache for a short period of time

Samuel Štancl:

Sure, when that's feasible, it's the better approach. Just requires more work, and setting up caching like in this article is very straightforward and you can forget about it once you have it done.